[Cloud Native] Kubernetes Cluster Installation and Configuration: Node Initialization (master and node)

Kubernetes Cluster Installation and Configuration 02

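- I. Initialize the master node

- 1.1 Pull the required images

- 1.2 Initialize the cluster

- 1.3 Add the pod network add-on

- 1.3.1 Method 1

- 1.3.2 Method 2

- 1.4 Enable IPVS mode for kube-proxy

- II. Initialize the worker nodes

- 2.1 Install the environment

- 2.2 Adjust the node environment

- 2.3 Join the node to the cluster

- III. Reset a node

- Summary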

I. Initialize the master node

(1) Generate the default configuration file.

kubeadm config print init-defaults > init.default.yaml

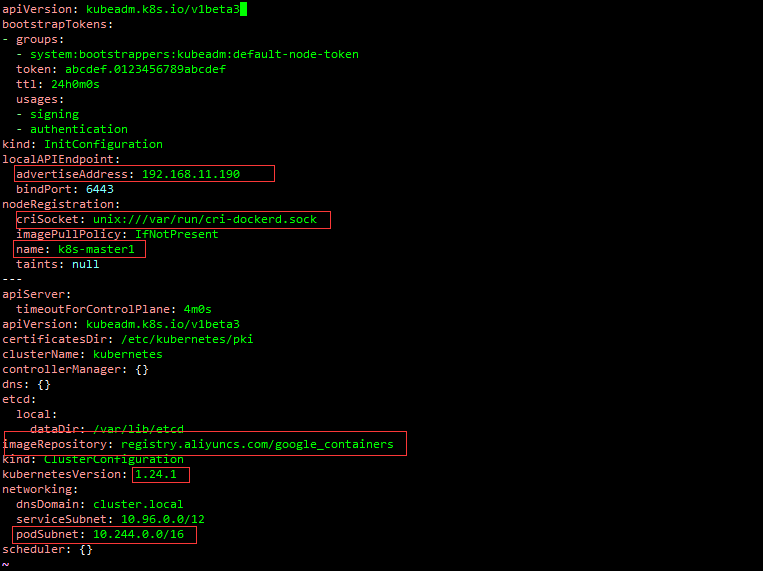

(2) Edit the configuration file. The fields to change are listed below by their YAML path:


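# Set the advertise address to this node's IP address

localAPIEndpoint.advertiseAddress: 192.168.11.190

# Set the CRI socket (cri-dockerd)

nodeRegistration.criSocket: unix:///var/run/cri-dockerd.sock

# Set the node name

nodeRegistration.name: k8s-master1

# Point the image repository at a mirror that is reachable from mainland China

imageRepository: registry.aliyuncs.com/google_containers

# Set the Kubernetes version

kubernetesVersion: 1.24.1

# Add podSubnet: the flannel network plugin installed later requires the pod CIDR to be specified when the cluster is initialized

# 10.244.0.0/16 is flannel's default pod network; the cluster setting and the network add-on's setting must match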

networking.podSubnet: 10.244.0.0/16
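The edits above can also be scripted instead of made by hand. The following is a minimal sketch, assuming GNU sed and the layout that kubeadm config print init-defaults produces (each key on its own line, appearing once). The function name patch_kubeadm_config is hypothetical, and the values are the ones used in this article, so replace the IP address and node name with your own.

```shell
# Sketch: apply the init.default.yaml edits listed above with GNU sed (-i, \s, \n).
# Assumes the layout printed by `kubeadm config print init-defaults`, where each
# key appears once. The values are this article's; change them for your host.
patch_kubeadm_config() {
  local file="${1:-init.default.yaml}"
  sed -i \
    -e 's#^\(\s*\)advertiseAddress: .*#\1advertiseAddress: 192.168.11.190#' \
    -e 's#^\(\s*\)criSocket: .*#\1criSocket: unix:///var/run/cri-dockerd.sock#' \
    -e 's#^\(\s*\)name: node$#\1name: k8s-master1#' \
    -e 's#^imageRepository: .*#imageRepository: registry.aliyuncs.com/google_containers#' \
    -e 's#^kubernetesVersion: .*#kubernetesVersion: 1.24.1#' \
    "$file"
  # podSubnet is not in the default output: add it right after serviceSubnet
  # with the same indentation (i.e. under networking), but only once.
  if ! grep -q 'podSubnet:' "$file"; then
    sed -i 's#^\(\s*\)serviceSubnet: .*#&\n\1podSubnet: 10.244.0.0/16#' "$file"
  fi
}

# Usage:
#   kubeadm config print init-defaults > init.default.yaml
#   patch_kubeadm_config init.default.yaml
#   grep -nE 'advertiseAddress|criSocket|name:|imageRepository|kubernetesVersion|podSubnet' init.default.yaml
```

Running it twice is harmless: the substitutions no longer match their old values, and the podSubnet line is only added when it is missing.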

1.1 Pull the required images

sudo kubeadm config images pull --config=init.default.yaml

If you get the error failed to pull image "registry.aliyuncs.com/google_containers/kube-apiserver:v1.24.1": output: E0317 08:32:39.321814, the full error looks like this:

fly@fly:~$ sudo kubeadm config images pull --config=init.default.yaml

failed to pull image "registry.aliyuncs.com/google_containers/kube-apiserver:v1.24.1": output: E0317 08:32:39.321814 14391 remote_image.go:171] "PullImage from image service failed" err="rpc error: code = Unavailable desc = connection error: desc = \"transport: Error while dialing dial unix /var/run/cri-dockerd.sock: connect: connection refused\"" image="registry.aliyuncs.com/google_containers/kube-apiserver:v1.24.1"

time="2023-03-17T08:32:39Z" level=fatal msg="pulling image: rpc error: code = Unavailable desc = connection error: desc = \"transport: Error while dialing dial unix /var/run/cri-dockerd.sock: connect: connection refused\""

, error: exit status 1

To see the stack trace of this error execute with --v=5 or higher

The images could not be pulled. Run kubeadm config images list --config init.default.yaml to see which images need to be downloaded:

fly@fly:~$ kubeadm config images list --config init.default.yaml

registry.aliyuncs.com/google_containers/kube-apiserver:v1.24.1

registry.aliyuncs.com/google_containers/kube-controller-manager:v1.24.1

registry.aliyuncs.com/google_containers/kube-scheduler:v1.24.1

registry.aliyuncs.com/google_containers/kube-proxy:v1.24.1

registry.aliyuncs.com/google_containers/pause:3.7

registry.aliyuncs.com/google_containers/etcd:3.5.3-0

registry.aliyuncs.com/google_containers/coredns:v1.8.6

The cause was that the installed Docker and cri-dockerd versions were incompatible; upgrade whichever of the two is older. I am using Docker v20.10.21 and cri-dockerd 0.3.1.
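Since the error itself is a refused connection on /var/run/cri-dockerd.sock, it can help to confirm that cri-dockerd is actually serving that socket before retrying the pull. A minimal sketch follows. The helper name cri_socket_exists is hypothetical, and the systemd unit names cri-docker.service and cri-docker.socket are assumed from cri-dockerd's upstream packaging. A socket file can exist while nothing listens on it, so a pass is only a first check.

```shell
# Sketch: first check when kubeadm reports
# "dial unix /var/run/cri-dockerd.sock: connect: connection refused".
# It only verifies that the path is a Unix socket; a stale socket file can
# still refuse connections, so also look at the service status.
cri_socket_exists() {
  local sock="${1:-/var/run/cri-dockerd.sock}"
  if [ -S "$sock" ]; then
    echo "socket present: $sock"
  else
    echo "no Unix socket at $sock; is cri-dockerd running?" >&2
    return 1
  fi
}

# Usage on the master (unit names assumed from upstream cri-dockerd packaging):
#   cri_socket_exists || sudo systemctl status cri-docker.service cri-docker.socket
#   docker version --format '{{.Server.Version}}'   # compare with: cri-dockerd --version
```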

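1.2 Initialize the cluster

You can initialize the cluster either from the configuration file or with command-line flags; the configuration file is recommended.

# Initialize from the configuration file (recommended)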

sudo kubeadm init --config=init.default.yaml

# Or initialize with command-line flags

sudo kubeadm init --image-repository registry.aliyuncs.com/google_containers --kubernetes-version=v1.24.1 --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.11.190 --cri-socket unix:///var/run/cri-dockerd.sock

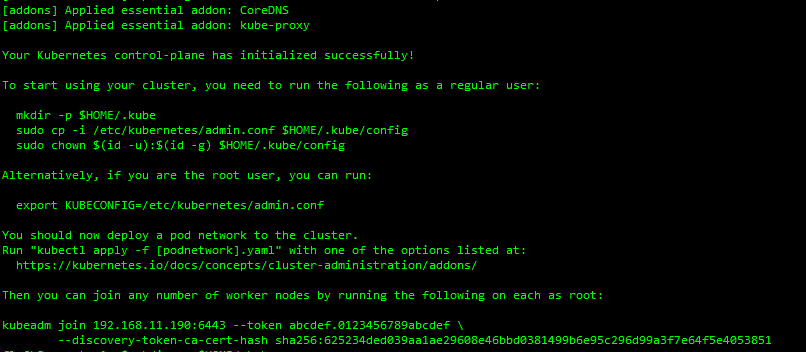

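After a successful initialization, kubeadm prints the following; run the commands it suggests.

If you are working as a regular (non-root) user, run: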

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

If you are working as root, set the environment variable instead:

# Append this line to the end of /etc/profile

export KUBECONFIG=/etc/kubernetes/admin.conf

# Load it into the current shell immediately

source /etc/profile

Note: choose one of the two options above.

kubeadm also prints the command for adding nodes to the cluster. Note: you must append --cri-socket unix:///var/run/cri-dockerd.sock to it. For example (the token and hash are specific to each cluster):

kubeadm join 192.168.11.190:6443 --token abcdef.0123456789abcdef --discovery-token-ca-cert-hash sha256:625234ded039aa1ae29608e46bbd0381499b6e95c296d99a3f7e64f5e4053851 --cri-socket unix:///var/run/cri-dockerd.sock

kubeadm join 192.168.11.190:6443 --token abcdef.0123456789abcdef --discovery-token-ca-cert-hash sha256:ce8db5a985c3c9739f3f0d187c5675d9c5f05a2994dbbde33324f2b93c66048e --cri-socket unix:///var/run/cri-dockerd.sock

If the token has expired (bootstrap tokens are valid for 24 hours by default), run the following on the master node to get a new join command:

sudo kubeadm token create --print-join-command

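Check the cluster status. kubectl reads the kubeconfig configured above for the current user, so run it without sudo. Until a pod network add-on is installed (section 1.3), the node shows NotReady and the coredns pods stay Pending.

# List all pods

kubectl get pods --all-namespaces

# List nodes

kubectl get node

# Check control-plane component status (ComponentStatus is deprecated since v1.19 but still returns results)

kubectl get cs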

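1.3 Add the pod network add-on

Related documentation:

- Network add-on documentation

- flannel

1.3.1 Method 1

(1) Download the flannel manifest to a local file named kube-flannel.yml (copying its contents into a local file also works). The version used here (flannel v0.21.3) is: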

https://github.com/flannel-io/flannel/blob/master/Documentation/kube-flannel.yml

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
- apiGroups:
  - "networking.k8s.io"
  resources:
  - clustercidrs
  verbs:
  - list
  - watch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: docker.io/flannel/flannel-cni-plugin:v1.1.2
        #image: docker.io/rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.2
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: docker.io/flannel/flannel:v0.21.3
        #image: docker.io/rancher/mirrored-flannelcni-flannel:v0.21.3
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: docker.io/flannel/flannel:v0.21.3
        #image: docker.io/rancher/mirrored-flannelcni-flannel:v0.21.3
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate
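Because podSubnet in init.default.yaml and the "Network" value in flannel's net-conf.json must match, a quick consistency check before applying the manifest can save a failed rollout. This is a sketch that parses both files with grep and sed, assuming the layouts shown in this article (one podSubnet line, one "Network" entry); the function names are hypothetical.

```shell
# Print the first podSubnet value found in a kubeadm config file.
pod_subnet_of() {
  grep -oE 'podSubnet: *[0-9./]+' "$1" | head -n1 | awk '{print $2}'
}

# Print the first "Network" value found in flannel's net-conf.json.
flannel_network_of() {
  grep -oE '"Network": *"[^"]+"' "$1" | head -n1 | sed -E 's/.*"([^"]+)"$/\1/'
}

# Succeed only if both values are present and identical.
check_subnets_match() {
  local kubeadm_cfg="$1" flannel_yml="$2" pod net
  pod="$(pod_subnet_of "$kubeadm_cfg")"
  net="$(flannel_network_of "$flannel_yml")"
  if [ -n "$pod" ] && [ "$pod" = "$net" ]; then
    echo "OK: podSubnet and flannel Network are both $pod"
  else
    echo "MISMATCH: podSubnet='$pod' flannel Network='$net'" >&2
    return 1
  fi
}

# Usage: check_subnets_match init.default.yaml kube-flannel.yml
```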

(2) Apply the manifest.

kubectl apply -f kube-flannel.yml

This creates the namespace, the RBAC role and role binding, the service account, the ConfigMap, and the DaemonSet:

fly@k8s-master1:~$ kubectl apply -f kube-flannel.yml

namespace/kube-flannel created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

1.3.2 Method 2

Alternatively, apply the manifest directly from its URL to install the network plugin:

# Install the network plugin

kubectl apply -f https://raw.githubusercontent.com/flannel-io/flannel/master/Documentation/kube-flannel.yml

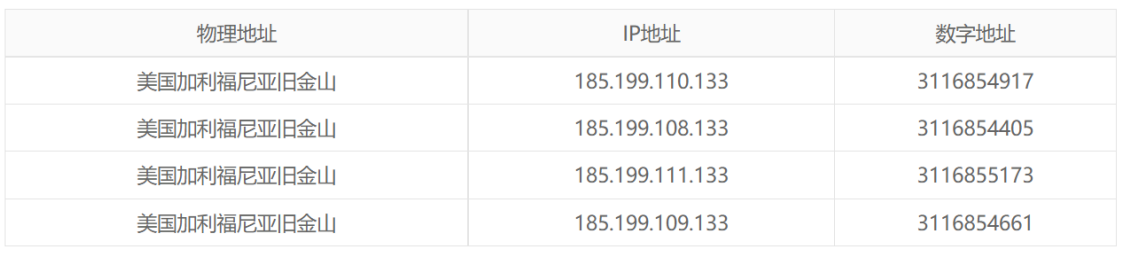

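This may fail because the domain name cannot be resolved.

To fix it:

(1) Look up the IP address of raw.githubusercontent.com with a third-party IP lookup website.

(2) Add an entry like the following to /etc/hosts:

185.199.108.133 raw.githubusercontent.com

(3) Run the network plugin installation again.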

kubectl apply -f https://raw.githubusercontent.com/flannel-io/flannel/master/Documentation/kube-flannel.yml
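Step (2) can be made safe to repeat. This sketch (hypothetical function add_hosts_entry) appends the mapping only when no uncommented line in the hosts file already lists that host name. The IP address must come from your own lookup in step (1); GitHub's addresses can change, so 185.199.108.133 is only the value used in this article.

```shell
# Sketch: append "IP HOSTNAME" to a hosts file unless an uncommented line
# already maps HOSTNAME (exact field match, so partial names do not count).
add_hosts_entry() {
  local ip="$1" host="$2" file="${3:-/etc/hosts}"
  if awk -v h="$host" '
      $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
      END { exit !found }' "$file"; then
    echo "$host is already mapped in $file; edit that line instead" >&2
  else
    printf '%s %s\n' "$ip" "$host" >> "$file"
  fi
}

# Usage (as root, because /etc/hosts is owned by root):
#   add_hosts_entry 185.199.108.133 raw.githubusercontent.com
```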

1.4 Enable IPVS mode for kube-proxy

# Edit the kube-proxy ConfigMap

kubectl edit -n kube-system cm kube-proxy

# In the editor, set mode: "ipvs", then save and exit

# Restart the kube-proxy DaemonSet

kubectl rollout restart -n kube-system daemonset kube-proxy
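If you prefer a non-interactive edit to kubectl edit, the ConfigMap can be piped through sed and re-applied. This is a sketch assuming the kubeadm-generated ConfigMap keeps the setting on its own line as mode: "..." inside config.conf; set_kube_proxy_mode is a hypothetical helper name. IPVS mode also relies on the ip_vs kernel modules being available on every node; once it takes effect, sudo ipvsadm -Ln should list virtual servers for the cluster's services.

```shell
# Sketch: rewrite the `mode: "..."` line of kube-proxy's config.conf.
# Reads YAML on stdin and writes the edited YAML to stdout. The regex is
# anchored to the start of the line, so keys such as detectLocalMode are untouched.
set_kube_proxy_mode() {
  local mode="${1:-ipvs}"
  sed -E "s/^([[:space:]]*)mode: \"[^\"]*\"/\\1mode: \"${mode}\"/"
}

# Usage:
#   kubectl -n kube-system get cm kube-proxy -o yaml | set_kube_proxy_mode ipvs | kubectl apply -f -
#   kubectl rollout restart -n kube-system daemonset kube-proxy
```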

Check the cluster status:

kubectl get pod --all-namespaces

# or

kubectl get pod -A
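Rather than re-running kubectl get pod -A until everything settles, kubectl can block until the flannel DaemonSet has rolled out and every node reports Ready. A sketch, assuming the kube-flannel namespace and kube-flannel-ds DaemonSet created by the flannel manifest in 1.3; the function name wait_for_cluster and the 300s default timeout are arbitrary choices.

```shell
# Sketch: wait for flannel's DaemonSet rollout, then for all nodes to be Ready.
# Returns non-zero if either step fails or times out.
wait_for_cluster() {
  local timeout="${1:-300s}"
  kubectl -n kube-flannel rollout status daemonset/kube-flannel-ds --timeout="$timeout" &&
    kubectl wait --for=condition=Ready node --all --timeout="$timeout"
}

# Usage: wait_for_cluster && kubectl get pod -A
```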

This completes the initialization of the master node.

If a pod is not in a normal state, read its logs to find the error:

kubectl -n kube-system logs <pod-name>
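To find which pod's logs to read, the pod table can be filtered for anything that is not Running or Completed. This sketch parses the default columns of kubectl get pods -A --no-headers (NAMESPACE, NAME, READY, STATUS, ...); the function names are hypothetical, and a Running pod whose READY column shows 0/1 is not caught by this filter.

```shell
# List pods whose STATUS is neither Running nor Completed as "NAMESPACE NAME STATUS".
unhealthy_pods() {
  kubectl get pods -A --no-headers |
    awk '$4 != "Running" && $4 != "Completed" { print $1, $2, $4 }'
}

# Print the describe/logs commands worth running for each unhealthy pod.
suggest_debug_commands() {
  unhealthy_pods | while read -r ns name status; do
    echo "# $ns/$name is $status"
    echo "kubectl -n $ns describe pod $name"
    echo "kubectl -n $ns logs $name --all-containers"
  done
}

# Usage: suggest_debug_commands
```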

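II. Initialize the worker nodes

2.1 Install the environment

- Install Docker.

- Install the cri-dockerd runtime and start its service.

- Install kubeadm, kubelet, kubectl, and related tools.

2.2 Adjust the node environment

- Change Docker's cgroup driver.

- Disable the firewall.

- Disable SELinux.

- Disable swap.

- Change the hostname (if needed).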
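The 2.2 checklist might look like the following on an Ubuntu node. This is a sketch under assumptions: the firewall is ufw, SELinux is only present on RHEL-like systems, and no /etc/docker/daemon.json exists yet (the helper overwrites it). Only the two file edits are wrapped in hypothetical functions so they can be tried on a copy first; the remaining commands are listed as comments to run as root, and the node name k8s-node1 is hypothetical.

```shell
# Set Docker's cgroup driver to systemd. Overwrites the file, so merge by hand
# if /etc/docker/daemon.json already holds other settings.
write_docker_daemon_json() {
  local file="${1:-/etc/docker/daemon.json}"
  mkdir -p "$(dirname "$file")"
  cat > "$file" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Comment out active swap entries so swap stays off after a reboot.
comment_swap_in_fstab() {
  local file="${1:-/etc/fstab}"
  sed -i -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$file"
}

# Usage on the node (as root):
#   write_docker_daemon_json && systemctl restart docker
#   docker info --format '{{.CgroupDriver}}'   # expect: systemd
#   systemctl disable --now ufw                # firewalld on RHEL-like systems
#   setenforce 0                               # RHEL-like only; also set SELINUX=permissive in /etc/selinux/config
#   swapoff -a && comment_swap_in_fstab
#   hostnamectl set-hostname k8s-node1         # hypothetical node name
```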

2.3 Join the node to the cluster

Run the following on the master node to get the join command:

sudo kubeadm token create --print-join-command

Append --cri-socket unix:///var/run/cri-dockerd.sock to the printed command, then run it with sudo on the node.
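Appending the flag by hand is easy to forget, so a small helper can do it. The sketch below (hypothetical function with_cri_socket) adds --cri-socket unix:///var/run/cri-dockerd.sock to whatever kubeadm token create --print-join-command prints, unless the command already contains a --cri-socket flag.

```shell
# Sketch: append the cri-dockerd socket flag to a kubeadm join command
# unless a --cri-socket flag is already present.
with_cri_socket() {
  local cmd="$1" sock="${2:-unix:///var/run/cri-dockerd.sock}"
  case "$cmd" in
    *--cri-socket*) printf '%s\n' "$cmd" ;;
    *) printf '%s --cri-socket %s\n' "$cmd" "$sock" ;;
  esac
}

# Usage on the master:
#   join_cmd="$(sudo kubeadm token create --print-join-command)"
#   with_cri_socket "$join_cmd"
# Then run the printed line with sudo on the node.
```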

III. Reset a node

(1) Reset command:

sudo kubeadm reset

# or, telling kubeadm explicitly which CRI socket to use

sudo kubeadm reset --cri-socket unix:///var/run/cri-dockerd.sock

(2) Delete the related files:

sudo rm -rf /var/lib/kubelet /var/lib/dockershim /var/run/kubernetes /var/lib/cni /etc/cni/net.d $HOME/.kube/config

(3) Clear the IPVS tables with ipvsadm --clear:

sudo ipvsadm --clear

(4) Remove the cni0 network interface:

sudo ip link set cni0 down

sudo ip link delete cni0
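The four reset steps can be collected into one function. Every step is destructive, so this sketch supports a dry run (DRY_RUN=1 only prints the commands). The function names are hypothetical; it passes -f to kubeadm reset to skip the confirmation prompt, uses ip link set instead of ifconfig, and assumes it runs as root with HOME pointing at the user whose ~/.kube/config should be removed.

```shell
# Run a command, or only print it when DRY_RUN is set.
maybe_run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Sketch: the reset steps above, in order.
reset_node() {
  maybe_run kubeadm reset -f --cri-socket unix:///var/run/cri-dockerd.sock
  maybe_run rm -rf /var/lib/kubelet /var/lib/dockershim /var/run/kubernetes /var/lib/cni \
    /etc/cni/net.d "$HOME/.kube/config"
  maybe_run ipvsadm --clear
  # Only touch cni0 if the interface exists (this check is read-only).
  if ip link show cni0 >/dev/null 2>&1; then
    maybe_run ip link set cni0 down
    maybe_run ip link delete cni0
  fi
}

# Usage: DRY_RUN=1 reset_node to review the commands, then reset_node in a root shell.
```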

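Summary

Setting up a Kubernetes cluster involves a lot of configuration, but when something goes wrong, the cause is almost always the configuration, a version mismatch, or insufficient machine resources. For example, while initializing Kubernetes I once typed an equality operator (==) instead of an equals sign (=) in a configuration file, and the node could never be found during initialization. The details matter.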